Record Consistency Analysis Batch – Puritqnas, Rasnkada, reginab1101, Site #Theamericansecrets

This Record Consistency Analysis batch examines cross-source integrity among Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets. The approach is analytical and methodical, centered on discrepancy detection, lineage tracing, and change auditing. It harmonizes terminology and detects semantic drift so that every validation step is reproducible. The outcome is a set of records with documented provenance, ready for governance review. Unresolved gaps may still surface, however, and they warrant further scrutiny as the batch proceeds under stringent controls.

What Is Record Consistency Analysis and Why It Matters

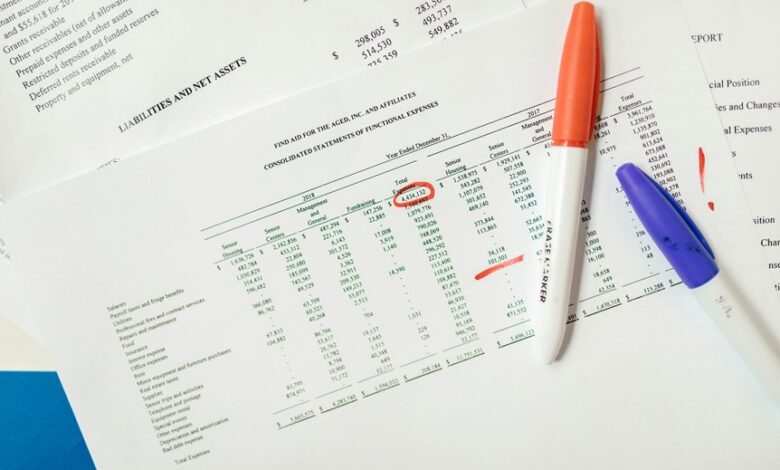

Record Consistency Analysis (RCA) is a structured process that evaluates whether data records across multiple systems and sources reflect the same information, with a focus on identifying discrepancies, gaps, and duplications.

RCA shows where record integrity holds and where it breaks. That makes it possible to trace data lineage, audit changes, and keep datasets cohesive.

It supports open, verifiable use of data through transparent, reproducible, and auditable governance.
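The three kinds of inconsistency named in the definition (discrepancies, gaps, and duplications) can be illustrated with a short comparison routine. This is a minimal sketch, assuming each source is a list of dictionaries that share a common key field (here a hypothetical `id`). The function name `compare_sources` and the sample records are illustrative only. They are not part of the batch described above.

```python
from collections import Counter
from typing import Dict, List

Record = Dict[str, str]

def compare_sources(sources: Dict[str, List[Record]], key: str = "id") -> dict:
    """Report duplicates, gaps, and field-level discrepancies across sources.

    Hypothetical helper: assumes every record carries the `key` field.
    """
    report: dict = {"duplicates": {}, "gaps": {}, "discrepancies": {}}
    indexed: Dict[str, Dict[str, Record]] = {}

    for name, records in sources.items():
        # Duplications: the same key appears more than once within one source.
        counts = Counter(r[key] for r in records)
        dups = sorted(k for k, c in counts.items() if c > 1)
        if dups:
            report["duplicates"][name] = dups
        # Keep only the first occurrence of each key for cross-source comparison.
        indexed[name] = {}
        for r in records:
            indexed[name].setdefault(r[key], r)

    all_keys = set().union(*(idx.keys() for idx in indexed.values()))
    for k in sorted(all_keys):
        # Gaps: the key is missing from one or more sources.
        missing = sorted(n for n, idx in indexed.items() if k not in idx)
        if missing:
            report["gaps"][k] = missing
        # Discrepancies: sources that hold the key disagree on a field value.
        present = [idx[k] for idx in indexed.values() if k in idx]
        fields = set().union(*(r.keys() for r in present)) - {key}
        for f in sorted(fields):
            values = {n: idx[k].get(f) for n, idx in indexed.items() if k in idx}
            if len(set(values.values())) > 1:
                report["discrepancies"].setdefault(k, {})[f] = values
    return report

# Hypothetical sample data for illustration.
sources = {
    "Puritqnas": [{"id": "1", "name": "Ann"}, {"id": "2", "name": "Bo"}, {"id": "2", "name": "Bo"}],
    "Rasnkada": [{"id": "1", "name": "Anne"}],
}
report = compare_sources(sources)
print(report)
```

Keeping only the first occurrence of a duplicated key is a simplifying choice. A stricter variant could compare every duplicate against the other sources.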

Detecting Inconsistencies Across Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets

The analysis pairs a purity assessment of each source with synonym mapping. Mapping synonyms onto shared terms aligns terminology, exposes semantic drift, and keeps the sources coherent with one another. The result is a transparent, verifiable view of batch integrity and provenance.
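Synonym mapping can be sketched as a lookup from source-specific terms to canonical ones. This is a minimal sketch under two assumptions: a hand-maintained synonym table (the `SYNONYMS` entries below are hypothetical), and the simplification that any term matching neither a synonym nor a canonical name is treated as a semantic-drift candidate for manual review.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical synonym table: source-specific term -> canonical term.
SYNONYMS: Dict[str, str] = {
    "pt_id": "patient_id",
    "patientid": "patient_id",
    "dob": "birth_date",
    "date_of_birth": "birth_date",
}
CANONICAL = set(SYNONYMS.values())

def normalize(term: str) -> str:
    """Trim whitespace and lowercase so trivial spelling variants match."""
    return term.strip().lower()

def map_terms(terms: Iterable[str]) -> Tuple[Dict[str, str], List[str]]:
    """Map raw terms to canonical ones; unmapped terms are drift candidates."""
    mapped: Dict[str, str] = {}
    drift: List[str] = []
    for raw in terms:
        t = normalize(raw)
        if t in CANONICAL:
            mapped[raw] = t              # already canonical
        elif t in SYNONYMS:
            mapped[raw] = SYNONYMS[t]    # known synonym
        else:
            drift.append(raw)            # unknown term: flag for review
    return mapped, drift

mapped, drift = map_terms(["PatientID", "DOB", "birth_date", "visit_cd"])
print(mapped)
print(drift)
```

Real drift detection may also need to compare value distributions, not just field names. This sketch covers only the terminology side.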

Practical Batch Validation Techniques You Can Implement Now

Practical batch validation techniques can be implemented immediately by establishing a disciplined, stepwise protocol that emphasizes reproducibility, traceability, and objective criteria.

The protocol consists of standardized checks, parameter logging, and incremental verification, which together produce tidy datasets and robust audit trails.

This approach supports objective decision-making, minimizes ambiguity, and upholds data integrity. It also lets validation scale transparently across the Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets analyses.
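A stepwise protocol of this kind can be sketched as a chain of named checks. Each check runs only on the records that survived the previous one, and each step writes an audit entry that records its parameters and pass/reject counts. This is a minimal sketch. The `run_checks` function, the two example checks, and the `params` values are hypothetical and were chosen only for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]
Check = Callable[[Record], bool]

def run_checks(
    records: List[Record],
    checks: List[Tuple[str, Check]],
    params: Dict[str, object],
) -> Tuple[List[Record], List[dict]]:
    """Apply checks in order (incremental verification) and log each step."""
    audit: List[dict] = []
    current = records
    for name, check in checks:
        passed = [r for r in current if check(r)]
        audit.append({
            "step": name,
            "params": params,  # parameter logging for reproducibility
            "input": len(current),
            "passed": len(passed),
            "rejected": len(current) - len(passed),
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        current = passed  # the next step sees only surviving records
    return current, audit

# Hypothetical parameters and checks.
params = {"required": ["id", "value"], "max_value": 100}
checks = [
    ("required_fields", lambda r: all(r.get(f) is not None for f in params["required"])),
    ("value_in_range", lambda r: 0 <= r["value"] <= params["max_value"]),
]
records = [{"id": "a", "value": 10}, {"id": "b", "value": None}, {"id": "c", "value": 250}]
clean, audit = run_checks(records, checks, params)
print(json.dumps(audit, indent=2))
```

Ordering the required-field check first means the range check never sees a missing value. This is one practical reason to verify incrementally.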

Reading Variance, Ensuring Traceability, and Strengthening Data Reliability

Methodically examining the variance between readings is essential for identifying sources of inconsistency and quantifying their impact on data quality. Documenting the measurement path and any deviations for each reading also improves traceability and makes decisions transparent.
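One way to quantify reading variance is to compute, for each record, the variance of the values reported by different sources. Records above a threshold are then flagged, and the per-source values are kept alongside each flag as its measurement path. This is a minimal sketch that assumes numeric readings and uses population variance (`statistics.pvariance`). The threshold and sample readings are hypothetical.

```python
import statistics
from typing import Dict, List

def reading_variance(readings: Dict[str, Dict[str, float]], threshold: float) -> List[dict]:
    """Flag records whose cross-source readings vary more than `threshold`.

    `readings` maps record_id -> {source_name: value}.
    """
    flagged: List[dict] = []
    for rid, by_source in sorted(readings.items()):
        values = list(by_source.values())
        if len(values) < 2:
            continue  # variance needs at least two readings to compare
        var = statistics.pvariance(values)
        if var > threshold:
            # Keep the per-source values so the deviation can be traced.
            flagged.append({"record": rid, "variance": var, "sources": dict(by_source)})
    return flagged

# Hypothetical readings for illustration.
readings = {
    "r1": {"Puritqnas": 5.0, "Rasnkada": 5.0, "reginab1101": 5.0},
    "r2": {"Puritqnas": 4.0, "Rasnkada": 8.0},
}
flagged = reading_variance(readings, threshold=1.0)
print(flagged)
```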

Data governance frameworks establish accountability, while audit trails provide verifiable records of changes.
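An audit trail becomes verifiable when later edits to past entries can be detected. One common technique, shown here as an illustration and not as the method used in this batch, is hash chaining. Each entry stores the SHA-256 hash of its change together with the previous entry's hash, so altering any earlier entry breaks the chain. The `append_entry` and `verify` helpers are hypothetical.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(change: dict, prev_hash: str) -> str:
    """Hash the change together with the previous entry's hash."""
    payload = json.dumps({"change": change, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(trail: List[dict], change: dict) -> None:
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    trail.append({"change": change, "prev": prev_hash, "hash": _digest(change, prev_hash)})

def verify(trail: List[dict]) -> bool:
    """Recompute every hash; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["change"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical change records.
trail: List[dict] = []
append_entry(trail, {"record": "r2", "field": "value", "old": 8.0, "new": 4.0, "by": "analyst"})
append_entry(trail, {"record": "r7", "field": "name", "old": "Anne", "new": "Ann", "by": "analyst"})
print(verify(trail))  # True
trail[0]["change"]["new"] = 5.0  # simulate tampering with an earlier entry
print(verify(trail))  # False
```

Hash chaining detects tampering but does not prevent it. Stronger guarantees would need the latest hash to be stored or signed somewhere outside the trail itself.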

Systematic reconciliation fortifies data reliability, supporting reproducibility, cross‑checking, and disciplined governance across batches and sites.
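Reconciliation needs an explicit rule for choosing one value when sources disagree. This is a minimal sketch of one possible rule, assumed here for illustration: majority vote across sources, with ties broken by a source-priority list. Both the `reconcile` function and the priority order below are hypothetical. A real batch would need a rule agreed on under its governance framework.

```python
from collections import Counter
from typing import Dict, List, Optional

def reconcile(values: Dict[str, Optional[str]], priority: List[str]) -> Optional[str]:
    """Pick the majority value across sources; break ties by source priority."""
    present = {s: v for s, v in values.items() if v is not None}
    if not present:
        return None
    counts = Counter(present.values())
    top = max(counts.values())
    candidates = {v for v, c in counts.items() if c == top}
    if len(candidates) == 1:
        return candidates.pop()
    # Tie: prefer the value reported by the highest-priority source.
    for source in priority:
        if present.get(source) in candidates:
            return present[source]
    return sorted(candidates)[0]  # deterministic fallback

# Hypothetical priority order and identifiers.
priority = ["Site Theamericansecrets", "Puritqnas", "Rasnkada", "reginab1101"]
print(reconcile({"Puritqnas": "P-001", "Rasnkada": "P-001", "reginab1101": "P-01"}, priority))
print(reconcile({"Puritqnas": "P-001", "Site Theamericansecrets": "P-1"}, priority))
```

The deterministic fallback keeps reconciliation reproducible even when no prioritized source breaks a tie.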

Conclusion

The record consistency analysis batch demonstrates rigorous, reproducible governance across disparate sources, delivering coherent provenance and auditable trails. By harmonizing terminology and applying objective validation steps, it surfaces discrepancies, gaps, and duplications so they can be remediated promptly. For example, in a hypothetical multi-site healthcare dataset, Puritqnas and Site Theamericansecrets might hold conflicting identifiers for the same patient; systematic lineage tracing and reconciliation would restore uniform records and reduce downstream errors. The approach yields transparent, reliable data ecosystems and strengthens cross-site accountability throughout the batch lifecycle.