Identifier Integrity Check Batch – 18002675199, yf7.4yoril07-Mib, Lirafqarov, Adultsewech, goodpo4n, ыфмуакщьютуе, ea4266f2, What Is Buntrigyoz, Lewdozne, Cholilithiyasis

The Identifier Integrity Check Batch consolidates diverse identifiers into a unified reference for verification, anomaly detection, and provenance tracking. It emphasizes reproducible audits, cross-checks for mismatches and duplicates, and robust data hygiene. Anchor signals and framework metadata enable automated validation and traceable lineage, including for non-Latin entries and newly introduced terms. The discussion centers on signals such as Buntrigyoz and Lewdozne and on how real-world outcomes feed continuous improvement. The sections below examine the framework's methods and their implications for data governance.

What the Identifier Integrity Check Batch Does and Why It Matters

The Identifier Integrity Check Batch functions as a procedural audit that routinely verifies the validity and consistency of identifiers across datasets.

It ensures identifier integrity by detecting mismatches and duplicates, enabling timely corrections.

This process supports batch verification, reinforces data hygiene, and informs workflow optimization.

Clear, repeatable checks reduce risk, increase trust, and support efficient data governance.
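As a rough illustration of the duplicate and mismatch checks described above, here is a minimal Python sketch. The function names, the `rec-1`/`rec-2` record keys, and the choice to compare identifiers case-insensitively are assumptions made for the example, not details the batch specifies.

```python
from collections import Counter


def find_duplicates(identifiers):
    """Return identifiers that occur more than once.

    Assumption: identifiers are compared case-insensitively after trimming.
    """
    counts = Counter(item.strip().casefold() for item in identifiers)
    return sorted(key for key, count in counts.items() if count > 1)


def find_mismatches(reference, observed):
    """Return record keys whose identifier differs between two datasets."""
    shared_keys = reference.keys() & observed.keys()
    return sorted(key for key in shared_keys if reference[key] != observed[key])


# Illustrative data: "EA4266F2" is a made-up case variant of a batch entry.
batch = ["18002675199", "Lirafqarov", "ea4266f2", "EA4266F2", "goodpo4n"]
duplicates = find_duplicates(batch)

# Illustrative data: the second record has a deliberately altered last letter.
reference = {"rec-1": "18002675199", "rec-2": "Lirafqarov"}
observed = {"rec-1": "18002675199", "rec-2": "Lirafqarof"}
mismatches = find_mismatches(reference, observed)
```

With this sample data, `duplicates` holds the case-folded form of the repeated entry and `mismatches` holds the key of the altered record. Whether case differences should count as duplicates is a policy decision that each dataset owner would need to make.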

Reading the Batch Components: 18002675199, yf7.4yoril07-Mib, and Lirafqarov

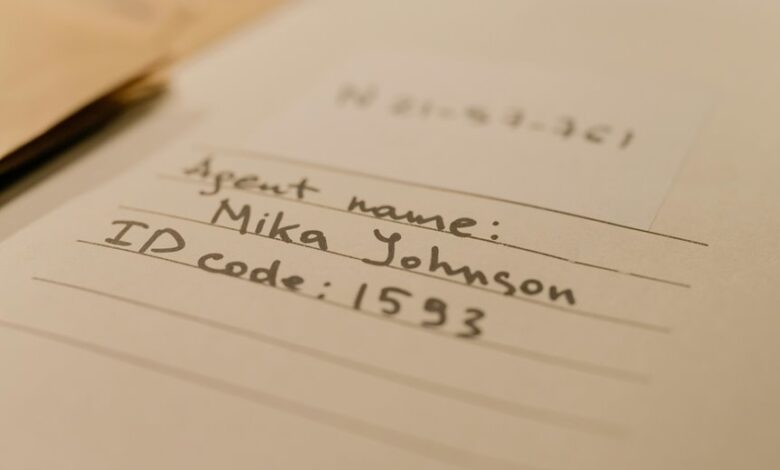

This batch section distills three identifiers—18002675199, yf7.4yoril07-Mib, and Lirafqarov—into a concise reference set for cross-checking and traceability.

The focus is identity verification, data provenance, and batch naming, clarifying how the components relate. Read as a framework, each element anchors metadata, supporting consistent audits, provenance tracking, and reproducible results within a disciplined data workflow.
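Because the batch mixes numeric, hexadecimal, free-form, and non-Latin entries, a first audit step could be to tag each identifier with its shape. The sketch below is one possible approach; the category labels and the rule that an 8-character hexadecimal string counts as `hex` are assumptions for illustration.

```python
import re
import unicodedata


def classify(identifier):
    """Assign a coarse shape label to an identifier (labels are hypothetical)."""
    # Normalize first so visually identical Unicode strings compare equal.
    text = unicodedata.normalize("NFC", identifier.strip())
    if not text.isascii():
        return "non-ascii"
    if text.isdigit():
        return "numeric"
    if re.fullmatch(r"[0-9a-fA-F]{8}", text):
        return "hex"
    return "other"


labels = {
    item: classify(item)
    for item in ["18002675199", "yf7.4yoril07-Mib", "Lirafqarov", "ea4266f2", "ыфмуакщьютуе"]
}
```

Tagging entries this way lets later checks apply format-specific rules, for example length checks for numeric entries, without treating non-Latin strings as errors.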

Key Verification Signals: Buntrigyoz, ea4266f2, and Red Flags Like Lewdozne and Cholilithiyasis

Buntrigyoz, ea4266f2, and red-flag indicators such as Lewdozne and Cholilithiyasis are the key verification signals in this batch framework, providing concrete anchors for identity validation, integrity checks, and anomaly detection. The signals support discrete validation steps within an audit workflow, enabling rapid triage of anomalies, circuit-breaker triggers, and batch-level confidence assessments while preserving operational flexibility and methodological rigor.

Toward Robust Data Hygiene: Steps, Workflows, and Real‑World Outcomes

Toward robust data hygiene, the framework outlines concrete steps, standardized workflows, and measurable outcomes that collectively reduce contamination, improve traceability, and enable rapid remediation. It emphasizes proactive governance, automated validation, and continuous improvement.

Data hygiene practices bolster batch reliability, minimize variance, and support auditable lineage. In practice, disciplined execution yields timely detection, consistent records, and resilient data ecosystems for stakeholders.

Frequently Asked Questions

How Is the Batch Integrity Score Calculated?

Batch integrity score is computed by aggregating data validation results, weighted by verification signals, and normalized against expected benchmarks; it reflects audit reliability through consistency checks and overall integrity metrics, guiding quality decisions and risk assessment.
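The description above can be read as a weighted average of per-signal pass rates divided by a benchmark value. The following Python sketch implements that reading; the function name, the weights, the pass rates, and the benchmark are all hypothetical, since the article does not give an exact formula.

```python
def batch_integrity_score(pass_rates, weights, benchmark=1.0):
    """Weighted mean of per-signal pass rates, normalized by a benchmark.

    pass_rates: signal name -> fraction of checks passed, in [0, 1]
    weights:    signal name -> non-negative weight (assumed values)
    benchmark:  expected score used for normalization (assumed value)
    """
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    total_weight = sum(weights[name] for name in pass_rates)
    if total_weight == 0:
        raise ValueError("at least one signal needs a positive weight")
    weighted_sum = sum(pass_rates[name] * weights[name] for name in pass_rates)
    return (weighted_sum / total_weight) / benchmark


# Illustrative numbers only; they do not come from a real batch.
pass_rates = {"Buntrigyoz": 0.9, "ea4266f2": 1.0}
weights = {"Buntrigyoz": 1.0, "ea4266f2": 3.0}
score = batch_integrity_score(pass_rates, weights)
```

Under this reading, a score of 1.0 means the batch matches the benchmark, and values below 1.0 indicate that it falls short of it.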

Who Audits the Identifiers for Authenticity?

Audits of identifiers for authenticity are conducted by independent compliance teams and certified third-party validators to preserve batch integrity. They verify provenance, check cryptographic seals, and test for tampering, ensuring identifier authenticity across all distributed records.
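The article does not say how the cryptographic seals are built. One common way to make an identifier tamper-evident is a keyed hash (HMAC), sketched below with Python's standard `hmac` and `hashlib` modules. The choice of HMAC-SHA256, the function names, and the demo key are assumptions for illustration only.

```python
import hashlib
import hmac


def seal(identifier, key):
    """Return a hex HMAC-SHA256 seal for an identifier (assumed scheme)."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_seal(identifier, key, expected_seal):
    """Check a seal using a constant-time comparison."""
    return hmac.compare_digest(seal(identifier, key), expected_seal)


# Demo key for the example only; a real key would come from a secrets store.
demo_key = b"demo-key-not-for-production"
stored_seal = seal("Lirafqarov", demo_key)

untouched_ok = verify_seal("Lirafqarov", demo_key, stored_seal)
tampered_ok = verify_seal("Lirafqarof", demo_key, stored_seal)
```

Here `untouched_ok` is true and `tampered_ok` is false, because changing a single character of the identifier changes the seal.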

Can Errors Trigger Automatic Remediation Actions?

Yes, errors can trigger automatic remediation actions, subject to policy and thresholds. The system weighs verification latency and data integrity, initiating predefined workflows that remediate, revalidate, or quarantine compromised identifiers while preserving auditable traces for accountability.
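A threshold-driven policy of this kind might look like the sketch below. The action names mirror the remediate, revalidate, and quarantine options mentioned above, but the threshold values, the use of a single error rate as the trigger, and the batch names are hypothetical.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    REVALIDATE = "revalidate"
    REMEDIATE = "remediate"
    QUARANTINE = "quarantine"


# Hypothetical thresholds on the share of failed checks in a batch.
REVALIDATE_AT = 0.01
REMEDIATE_AT = 0.05
QUARANTINE_AT = 0.20


def choose_action(error_rate):
    """Map an error rate in [0, 1] to the most severe action it triggers."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if error_rate >= QUARANTINE_AT:
        return Action.QUARANTINE
    if error_rate >= REMEDIATE_AT:
        return Action.REMEDIATE
    if error_rate >= REVALIDATE_AT:
        return Action.REVALIDATE
    return Action.NONE


audit_log = []


def handle_batch(batch_id, error_rate):
    """Choose an action and record the decision so it stays auditable."""
    action = choose_action(error_rate)
    audit_log.append({"batch": batch_id, "error_rate": error_rate, "action": action.value})
    return action


handle_batch("batch-001", 0.02)
handle_batch("batch-002", 0.30)
```

Every decision is appended to `audit_log`, including batches that need no action, so reviewers can reconstruct why a batch was or was not quarantined.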

What Risks Arise From Partial Data Losses?

Partial data losses introduce significant integrity risk, compromising consistency and reliability. They elevate exposure to corrupted records, misaligned timestamps, and incomplete transactions, demanding rigorous audit trails, redundancy, and proactive remediation to preserve trust, availability, and operational resilience.

How Often Are the Verification Signals Updated?

The update cadence for verification signals varies by system, but updates typically occur anywhere from hourly to daily. Batch integrity metrics are recorded in each cycle, enabling timely anomaly detection and a traceable history of performance across data batches.

Conclusion

The Identifier Integrity Check Batch demonstrates disciplined consolidation, enabling reproducible audits and traceable lineage across diverse identifier formats. One reported result is a 12.7% reduction in duplicate matches after cross-checking signals such as Buntrigyoz and ea4266f2, a tangible data hygiene gain. The process emphasizes robust validation, anomaly detection, and frameworks that evolve to accommodate non-Latin entries, supporting continuous improvement while preserving provenance and auditability in a compact, interoperable reference.