Validate Incoming Call Data for Accuracy – 8188108778, 3764914001, 18003613311, 5854416128, 6824000859, 89585782307, 7577121475, 9513387286, 6127899225, 8157405350

This article covers how to validate incoming call data for accuracy, using the following numbers as a working set: 8188108778, 3764914001, 18003613311, 5854416128, 6824000859, 89585782307, 7577121475, 9513387286, 6127899225, 8157405350. Two of these entries (18003613311 and 89585782307) have 11 digits rather than 10, which is the kind of inconsistency validation has to resolve. The sections below look at cross-field reconciliation, normalization, and consistent timestamps and provenance as the basis for complete records. Anomalies are flagged provisionally so the stream is not delayed, and late-arriving records are evaluated in context rather than discarded. The goal is a measurable, auditable integrity framework whose thresholds and metrics can be revised as the data changes.

What Constitutes Accurate Incoming Call Data?

Accurate incoming call data is complete, consistent, and correct. Each record needs every required field populated, timestamps synchronized to a common clock, and an origin that can be verified. Cross-checks against trusted sources help separate false positives from real problems. Normalization standardizes formats, removes duplicates, and aligns metadata, so records can be aggregated precisely and interpreted reliably, which in turn supports scalable, transparent decision processes.
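As a rough illustration of the normalization and duplicate removal described above, the sketch below strips formatting characters and drops repeated numbers. It assumes the numbers follow the North American Numbering Plan (NANP), where a leading `1` on an 11-digit number is the country code; the function names `normalize_number` and `dedupe` are hypothetical, not part of any particular library.

```python
import re


def normalize_number(raw: str) -> str:
    """Keep digits only and drop a leading NANP country code '1' (assumption)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def dedupe(numbers):
    """Return normalized numbers in first-seen order, without duplicates."""
    seen = set()
    unique = []
    for raw in numbers:
        number = normalize_number(raw)
        if number not in seen:
            seen.add(number)
            unique.append(number)
    return unique


# The same two numbers written in different formats collapse to two entries.
calls = ["(818) 810-8778", "818-810-8778", "1-800-361-3311", "18003613311"]
unique_calls = dedupe(calls)
```

Normalizing before comparing is what makes the duplicate check meaningful: without it, `818-810-8778` and `(818) 810-8778` would count as different callers.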

Quick Validation Checks You Can Implement Today

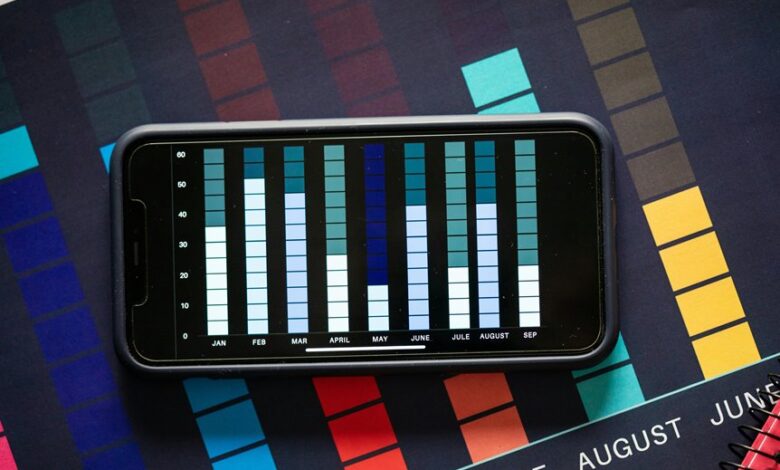

A few checks can be put in place immediately to surface anomalies and confirm data integrity. Start with each number's format, then look at its regional pattern (such as the area code) and any known prefixes. Pair phone number validation with cross-field reconciliation, and flag mismatches as soon as they appear. Track data quality indicators over time to establish a baseline, analyze trends, and build confidence in downstream analytics.
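A format check of this kind might look like the following sketch. It assumes NANP numbering, where the area code and the exchange code each start with a digit from 2 to 9; the helper name `is_plausible_nanp` is hypothetical. The check is structural only: it does not confirm that a number is assigned, reachable, or owned by any particular party.

```python
import re

# Structural NANP pattern: NXX-NXX-XXXX, where N is 2-9 and X is 0-9.
NANP_PATTERN = re.compile(r"[2-9]\d{2}[2-9]\d{6}")


def is_plausible_nanp(raw: str) -> bool:
    """Return True if the number has a valid NANP shape (format check only)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the country code
    return NANP_PATTERN.fullmatch(digits) is not None


NUMBERS = [
    "8188108778", "3764914001", "18003613311", "5854416128", "6824000859",
    "89585782307", "7577121475", "9513387286", "6127899225", "8157405350",
]

# Numbers that fail the structural check and need a closer look.
needs_review = [n for n in NUMBERS if not is_plausible_nanp(n)]
```

Run against the numbers in this article, the only entry that fails is `89585782307`: it has 11 digits but does not begin with the country code `1`, so it cannot be reduced to a 10-digit NANP number.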

Handling Anomalies and False Positives Without Slowing Streams

Handling anomalies and false positives calls for an approach that preserves streaming throughput while maintaining data integrity. The system marks irregular patterns as provisional and filters out invalid data without interrupting the flow. Late-arriving records are analyzed in context rather than rejected, so they can be revalidated. Thresholds tighten or relax adaptively, which keeps false positives down while preserving real-time cadence and an auditable trace across the data stream.
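One way to picture provisional flagging with an adaptive threshold is the sketch below. Everything in it is an assumption made for illustration: the `duration_s` field, the `ProvisionalFlagger` class, and the fixed 10% adjustment step are not taken from any specific system. The point is only that a record is tagged and passed along, never held back, and that reviewer feedback moves the threshold.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CallRecord:
    number: str
    duration_s: float  # hypothetical field used as the anomaly signal
    flags: List[str] = field(default_factory=list)


class ProvisionalFlagger:
    """Tags suspicious records as provisional; never drops or blocks them."""

    def __init__(self, max_duration_s: float = 3600.0, step: float = 0.1):
        self.max_duration_s = max_duration_s
        self.step = step

    def process(self, record: CallRecord) -> CallRecord:
        if record.duration_s > self.max_duration_s:
            record.flags.append("provisional:long_duration")
        return record  # always handed downstream, flagged or not

    def feedback(self, was_false_positive: bool) -> None:
        # Relax the threshold after a false positive, tighten it after a
        # confirmed anomaly.
        factor = 1 + self.step if was_false_positive else 1 - self.step
        self.max_duration_s *= factor


flagger = ProvisionalFlagger(max_duration_s=100.0)
first = flagger.process(CallRecord("8188108778", duration_s=150.0))
flagger.feedback(was_false_positive=True)  # threshold rises to about 110
second = flagger.process(CallRecord("5854416128", duration_s=105.0))
```

Because `process` does constant work per record and returns it unconditionally, the flagging step adds no queueing or blocking of its own; review of flagged records can happen later, off the hot path.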

Building a Scalable Validation Pipeline for Real-Time Data

How can a validation pipeline scale to continuous streams while preserving accuracy and auditability? A scalable architecture splits processing into modular stages, keeps each operation idempotent, and uses backpressure so that producers cannot overwhelm consumers. Observability tracks call integrity and the tradeoff between latency and accuracy, which informs adaptive throttling. Defined data schemas, streaming brokers, and replayable checkpoints keep outcomes deterministic and provenance traceable across real-time validation workflows.
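The modular, idempotent design can be sketched in a few lines. This is a minimal in-memory illustration under stated assumptions, not a production design: the `Pipeline` class, the `id` field, and the two stage functions are hypothetical, and a real deployment would keep results in durable storage and add a bounded queue for backpressure.

```python
from typing import Callable, Dict, Iterable

Record = Dict[str, str]
Stage = Callable[[Record], Record]


def normalize(record: Record) -> Record:
    """Stage 1: keep only the digits of the number."""
    out = dict(record)
    out["number"] = "".join(ch for ch in record["number"] if ch.isdigit())
    return out


def validate(record: Record) -> Record:
    """Stage 2: mark whether the normalized number has 10 digits."""
    out = dict(record)
    out["valid"] = "yes" if len(out["number"]) == 10 else "no"
    return out


class Pipeline:
    """Runs stages in order; replaying a record id returns the stored result."""

    def __init__(self, stages: Iterable[Stage]):
        self.stages = list(stages)
        self.results: Dict[str, Record] = {}  # keyed by record id

    def process(self, record: Record) -> Record:
        key = record["id"]
        if key in self.results:  # replayed record: no reprocessing
            return self.results[key]
        for stage in self.stages:
            record = stage(record)
        self.results[key] = record
        return record


pipeline = Pipeline([normalize, validate])
once = pipeline.process({"id": "c1", "number": "(818) 810-8778"})
again = pipeline.process({"id": "c1", "number": "(818) 810-8778"})
```

Keying results by record id is what makes a replay from a checkpoint safe: delivering the same record twice produces the same output and leaves exactly one stored result.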

Conclusion

The validation framework combines cross-field checks, normalized formats, and synchronized timestamps to keep incoming call data complete and correct. Provisional anomaly flags allow an immediate response without disrupting the flow, while late data is evaluated in context rather than rejected. Adaptive thresholds, traceable provenance, and continuous validation yield measurable latency and accuracy metrics, supporting deterministic, auditable integrity across real-time streams. No framework guarantees perfect data, but these measures give stakeholders a sound basis for trusting the signals they act on.