Validate Incoming Call Data for Accuracy – 4699838768, 3509811622, 9108065878, 920577469, 3761752716, 4123879299, 2129919991, 5034367335, 2484556960, 9069840117

Data quality for incoming call records must be treated as a disciplined, quantitative process. Each field (caller, callee, timestamp, and duration) requires a deterministic format, an immutable provenance stamp, and a real-time syntax check. Cross-field coherence should be enforced at capture: caller and callee must both be valid and distinct, and the timestamp and duration must describe a plausible call. Anomalies must be flagged for immediate correction, enabling traceable lineage and reliable metrics. The sections below cover concrete validation rules and governance controls.

What Makes Incoming Call Data Risky and Why Accuracy Matters

Incoming call data are prone to errors from multiple sources, including incomplete records, misentered numbers, duplicate entries, and timing discrepancies. This variability undermines data quality, complicating trend analysis and decision making.

Quantitative assessment reveals error rates, missingness, and inconsistency patterns. Systematic validation techniques reduce risk, preserving integrity while enabling reliable metrics and reproducible outcomes for downstream processes and stakeholder confidence.
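As a rough illustration of that kind of assessment, the sketch below computes the share of records missing each field. The field names follow the four fields named in this article; the dictionary layout and the sample values are assumptions made for the example, not data from a real system.

```python
from collections import Counter

# Fields named in this article; the dict-based record layout is an assumption.
REQUIRED_FIELDS = ("caller", "callee", "timestamp", "duration")


def missingness(records):
    """Return the fraction of records in which each required field is absent or empty."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in REQUIRED_FIELDS}
    missing = Counter()
    for rec in records:
        for field in REQUIRED_FIELDS:
            if rec.get(field) in (None, ""):
                missing[field] += 1
    return {field: missing[field] / total for field in REQUIRED_FIELDS}


# Illustrative sample: one empty caller, one null timestamp, one absent duration.
sample = [
    {"caller": "4699838768", "callee": "3509811622",
     "timestamp": "2024-01-01T10:00:00+00:00", "duration": 42},
    {"caller": "", "callee": "9108065878",
     "timestamp": "2024-01-01T10:05:00+00:00", "duration": 10},
    {"caller": "2129919991", "callee": "5034367335",
     "timestamp": None, "duration": 5},
    {"caller": "2484556960", "callee": "9069840117",
     "timestamp": "2024-01-01T10:09:00+00:00"},
]
rates = missingness(sample)
```

Tracking these per-field rates over time gives a baseline against which later validation and cleanup can be measured.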

4 Practical Validation Rules to Apply at Capture Time

A structured set of capture-time validation rules can markedly reduce data corruption by enforcing consistency before records enter the system.

The protocol specifies deterministic field formats, real-time syntax checks, and mandatory cross-field coherence to preserve call record integrity.
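One way these checks might look at capture time is sketched below. The concrete rules are assumptions chosen for illustration: 10-digit numbers, ISO 8601 timestamps with a UTC offset, durations in whole seconds capped at one day, and the hypothetical function name `validate_call`. A real deployment would substitute its own formats and limits.

```python
import re
from datetime import datetime, timedelta, timezone

PHONE_RE = re.compile(r"\d{10}")   # assumption: 10-digit national numbers
MAX_DURATION_S = 24 * 60 * 60      # assumption: no call exceeds one day


def validate_call(rec, now=None):
    """Return a list of rule violations; an empty list means the record passes."""
    now = now or datetime.now(timezone.utc)
    errors = []

    # Deterministic formats and syntax checks, one field at a time.
    for field in ("caller", "callee"):
        value = rec.get(field)
        if not isinstance(value, str) or not PHONE_RE.fullmatch(value):
            errors.append(f"{field}: expected 10 digits")

    start = None
    try:
        start = datetime.fromisoformat(rec.get("timestamp"))
        if start.tzinfo is None:
            errors.append("timestamp: missing UTC offset")
            start = None
    except (TypeError, ValueError):
        errors.append("timestamp: expected ISO 8601")

    duration = rec.get("duration")
    duration_ok = (
        isinstance(duration, int)
        and not isinstance(duration, bool)
        and 0 <= duration <= MAX_DURATION_S
    )
    if not duration_ok:
        errors.append("duration: expected whole seconds in range")

    # Cross-field coherence: distinct parties, and a call that has already ended.
    if rec.get("caller") is not None and rec.get("caller") == rec.get("callee"):
        errors.append("caller and callee must differ")
    if start is not None and duration_ok and start + timedelta(seconds=duration) > now:
        errors.append("call ends in the future")
    return errors
```

Returning every violation rather than stopping at the first makes it easier to flag a record once and correct all of its problems together. Under the 10-digit assumption, a 9-digit value such as 920577469 would be rejected.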

Data lineage is tracked via immutable timestamps, versioned schemas, and auditable provenance, ensuring traceability, reproducibility, and accountable attribution throughout ingestion and storage.
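A minimal sketch of such a provenance stamp follows. The `Provenance` class, the `stamp` function, the `SCHEMA_VERSION` value, and the source label are all hypothetical names introduced for this example; the idea is only that the stamp cannot be modified after creation and carries a content hash that later audits can recompute.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone

SCHEMA_VERSION = "1.0.0"  # hypothetical version label for the record schema


@dataclass(frozen=True)  # frozen: attributes cannot be reassigned after creation
class Provenance:
    schema_version: str
    source: str
    ingested_at: str
    content_sha256: str


def stamp(record, source, now=None):
    """Build a provenance stamp for a record at ingestion time."""
    now = now or datetime.now(timezone.utc)
    # Canonical JSON (sorted keys, fixed separators) so equal content hashes equally.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return Provenance(SCHEMA_VERSION, source, now.isoformat(), digest)
```

Storing the stamp next to the record lets an auditor confirm which schema version applied, where the record came from, and whether its content has changed since ingestion.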

How to Clean and Deduplicate Existing Call Records Efficiently

Cleaning and deduplicating existing call records requires a disciplined, data-driven approach that quantifies redundancy and removes it without compromising integrity. The process emphasizes reproducible metrics, robust matching rules, and staged consolidation. A clear deduplication strategy, anchored in call data quality benchmarks, minimizes false positives and preserves historical context while delivering auditable, scalable improvements across datasets.
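The sketch below shows one possible matching rule, under stated assumptions: two records count as duplicates when caller, callee, and duration are identical and their start times fall within a two-second tolerance. The tolerance, the helper names, and the record layout are illustrative choices, not a prescribed standard.

```python
from datetime import datetime, timedelta

TOLERANCE = timedelta(seconds=2)  # assumption: clock skew between capture systems


def _start(rec):
    return datetime.fromisoformat(rec["timestamp"])


def _key(rec):
    return (rec["caller"], rec["callee"], rec["duration"])


def deduplicate(records):
    """Return (kept, dropped); dropped holds (duplicate, survivor) pairs for audit."""
    # Sorting groups identical keys together, earliest start time first.
    ordered = sorted(records, key=lambda r: (_key(r), _start(r)))
    kept, dropped = [], []
    for rec in ordered:
        survivor = kept[-1] if kept else None
        if (survivor is not None
                and _key(survivor) == _key(rec)
                and _start(rec) - _start(survivor) <= TOLERANCE):
            dropped.append((rec, survivor))  # keep the link to the surviving record
        else:
            kept.append(rec)
    return kept, dropped
```

Because each dropped record is returned alongside the record that replaced it, the consolidation stays auditable and can be reversed if a match later turns out to be a false positive.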

Establishing Ongoing Data Hygiene: Governance, Metrics, and Automation

Establishing ongoing data hygiene requires a formal governance framework, clearly defined metrics, and automated controls that sustain data quality over time.

The approach defines roles, ownership, and accountability, aligning processes with data governance principles.

Continuous monitoring, anomaly detection, and scheduled audits measure data quality, driving corrective action.
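As one simple form of such monitoring, the sketch below flags days whose validation error rate deviates sharply from a trailing baseline. The seven-day window, the threshold of three standard deviations, and the function name `flag_anomalies` are assumptions for illustration; other detection methods would fit equally well.

```python
from statistics import mean, pstdev


def flag_anomalies(daily_error_rates, window=7, threshold=3.0):
    """Return indexes of days whose error rate is far from the trailing-window mean."""
    flagged = []
    for i in range(window, len(daily_error_rates)):
        baseline = daily_error_rates[i - window:i]
        mu, sigma = mean(baseline), pstdev(baseline)
        today = daily_error_rates[i]
        if sigma == 0:
            # A perfectly flat baseline: any change at all is worth a look.
            is_anomaly = today != mu
        else:
            is_anomaly = abs(today - mu) / sigma > threshold
        if is_anomaly:
            flagged.append(i)
    return flagged
```

A scheduled job could run this over the daily error rates produced by capture-time validation and open a ticket for each flagged day, which ties monitoring directly to corrective action.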

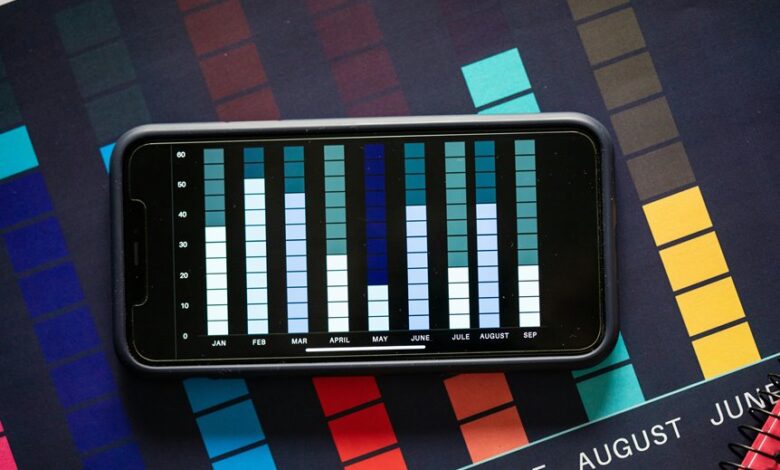

Transparent dashboards support disciplined decision-making while keeping data hygiene scalable and repeatable across datasets and platforms.

Conclusion

The data quality framework for incoming call records emphasizes deterministic formats, real-time syntax checks, and immutable timestamps to ensure provenance. By enforcing cross-field coherence (caller, callee, timestamp, duration) and staged validation, anomalies are flagged for immediate correction, enabling reproducible lineage and trustworthy metrics. With these controls in place, ongoing governance and automated validation can sustain accurate trend analysis across evolving datasets while preserving audit trails and the integrity of data capture.